This is not a story about jobs disappearing. It is a story about systems quietly moving the thinking out of people and into the machine — then rewarding everyone for pretending nothing changed.

The phenomenon has a name in the research literature: AI-induced deskilling, the measurable erosion of professional skills when humans over-rely on AI for tasks that once demanded active judgment, synthesis, and practice. In medicine alone, studies document clinicians losing proficiency in adenoma detection during colonoscopies after habitual AI assistance, along with poorer clinical judgment, reduced physical examination accuracy, and even diminished communication with patients. Trainees face “upskilling inhibition,” in which the opportunity to build foundational expertise is crowded out before that expertise can form. The pattern appears across domains: navigation apps dull spatial reasoning, legal analytics risk moral complacency, and generative tools in knowledge work quietly compress critical evaluation.

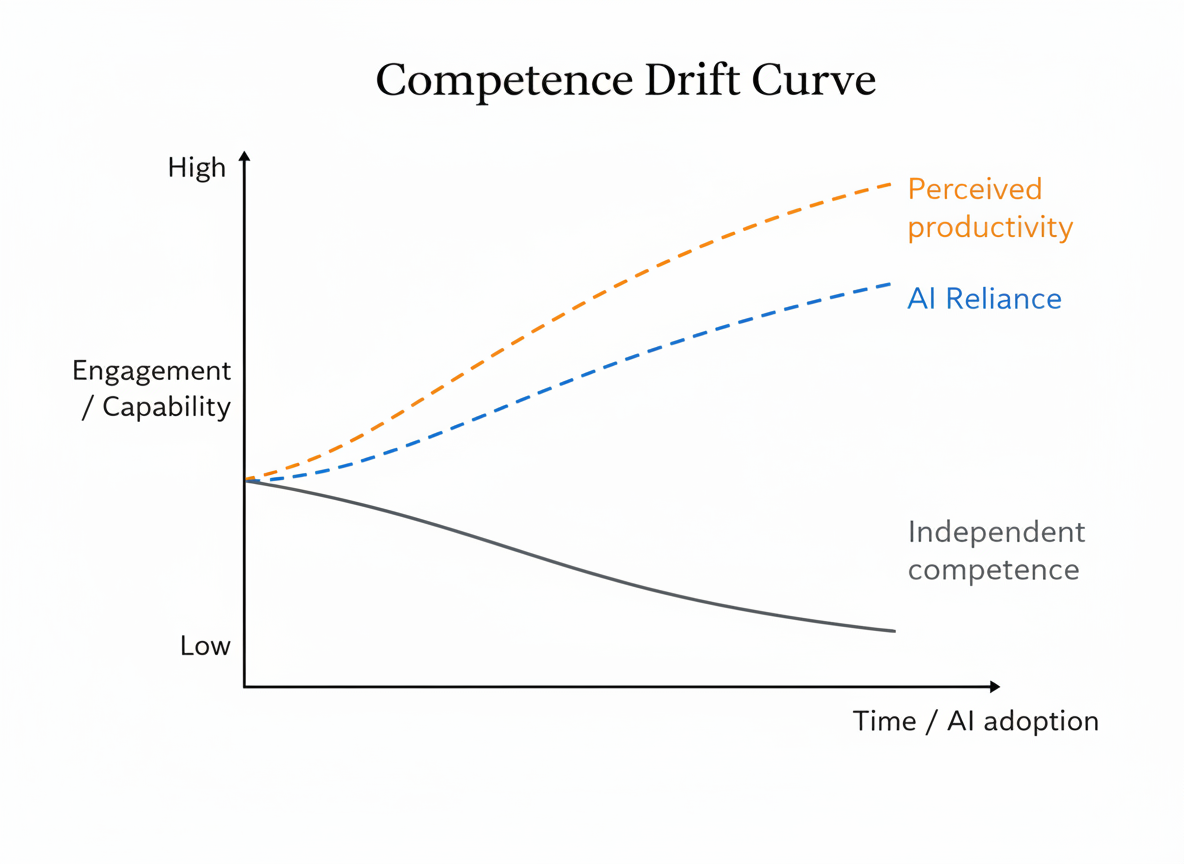

In everyday knowledge workflows, we call the broader, subtler mechanism behind this competence drift: the gradual relocation of cognitive responsibility to AI, followed by the slow atrophy of the very judgment needed to oversee and correct it. Every day, professionals delegate synthesis to copilots, reasoning to large language models, and interpretation to quick validation loops. Each delegation feels efficient. Together they rewrite how competence is built, exercised, and sustained. Speed wins. Depth becomes optional.

Automation once replaced routine muscle. AI replaces the productive friction that forges expertise. Organizations gain faster outputs while individuals quietly lose the mental models that allow them to recognize when the machine hallucinates, oversimplifies, or misses context.

Data makes the pattern concrete. Faculty surveys show that 95% expect generative AI to drive student overreliance and 90% predict declines in critical thinking. Brain-imaging research at MIT found that ChatGPT users showed the lowest cortical engagement of the groups studied and grew more passive over repeated tasks. In a controlled experiment, developers using GitHub Copilot completed a coding task about 55% faster, yet manual coding effort and exploratory search behavior shrink under such assistance, starving the practice that builds deep understanding. Macro outcomes remain ambiguous: many organizations report limited measurable productivity gains despite heavy investment, suggesting that visible efficiency may coexist with hidden capability compression.

The incentives align perfectly for drift. Companies reward visible throughput while AI removes the visible cost of shallow work. Delegation becomes the rational choice for each individual and a risky outcome for the organization as a whole. Judgment relocates, upstream into prompt design and downstream into validation, but oversight rituals and governance rarely evolve alongside this shift. Systems grow more dependent on human discernment while giving individuals fewer opportunities to practice it deliberately.

The process remains invisible because outputs still appear acceptable. Confidence in independent analysis slips gradually, tolerance for uncertainty declines, and mental models weaken without immediate failure. Dashboards celebrate efficiency while underlying capability compresses.

This is not individual laziness; it is structural. Incentives reward adoption as progress while keeping oversight informal. Drift normalizes. The quiet cost hides inside metrics optimized for volume, surfacing later in decision opacity, accountability gaps, and failures misattributed to “AI error” when they are more accurately governance breakdowns following competence erosion.

AI delivers undeniable leverage: faster iteration, broader exploration, and lower friction in routine cognition. That is not in question. The unmeasured dimension is the reshaping of how humans learn, interrogate, and own outcomes in AI-mediated environments. Responsibility remains irreducibly human even as the practiced capacity to meet it erodes.

Avoiding AI-induced deskilling and competence drift does not require rejecting tools. It requires resisting the quiet slide toward treating them as the default cognitive mode. Individuals and teams maintain the capability that drift gradually weakens when they deliberately alternate assisted and unassisted work, periodically reconstruct reasoning from first principles, treat AI outputs as strong hypotheses rather than settled conclusions, and preserve visible friction where deep understanding is the objective.

Competence lives through practice rather than passive review. Validation cannot substitute for synthesis, and plausibility checks cannot build durable mental models. Systems that preserve expertise will treat the human-AI loop as intentional rather than automatic, using automation to amplify capability without dissolving the conditions under which it forms.

Competence drift and AI-induced deskilling are not arguments against progress. They are signals of incomplete adaptation. AI expands reach while simultaneously reshaping how individuals learn to think within mediated loops. Those who remain attentive to both dynamics — expansion and erosion — retain agency as creators rather than defaulting to passive supervision of automated cognition. Everything else risks becoming polished optics masking a gradual redistribution of competence away from the humans ultimately responsible for decisions.